Welcome to Day 23! Today, we’ll assess the effectiveness of AI in visual testing compared to non-AI visual testing methods. The use of AI to detect visual anomalies within graphical user interfaces (GUIs) has great promise. So, let’s explore the potential advantages and pitfalls of adopting an AI-assisted visual testing approach.

Task Steps

Let’s begin this investigation by selecting one of two options based on your current experience and access to visual testing tools:

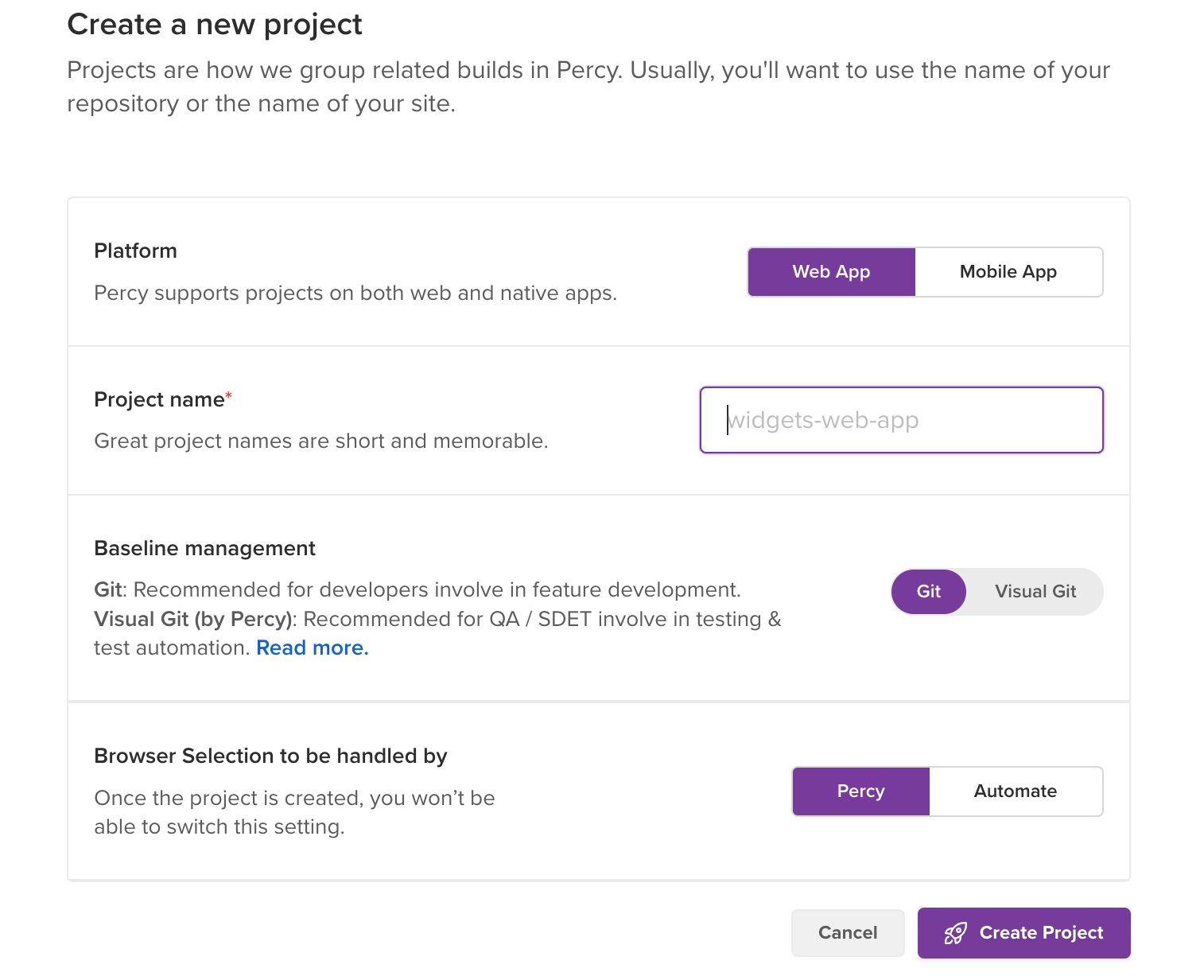

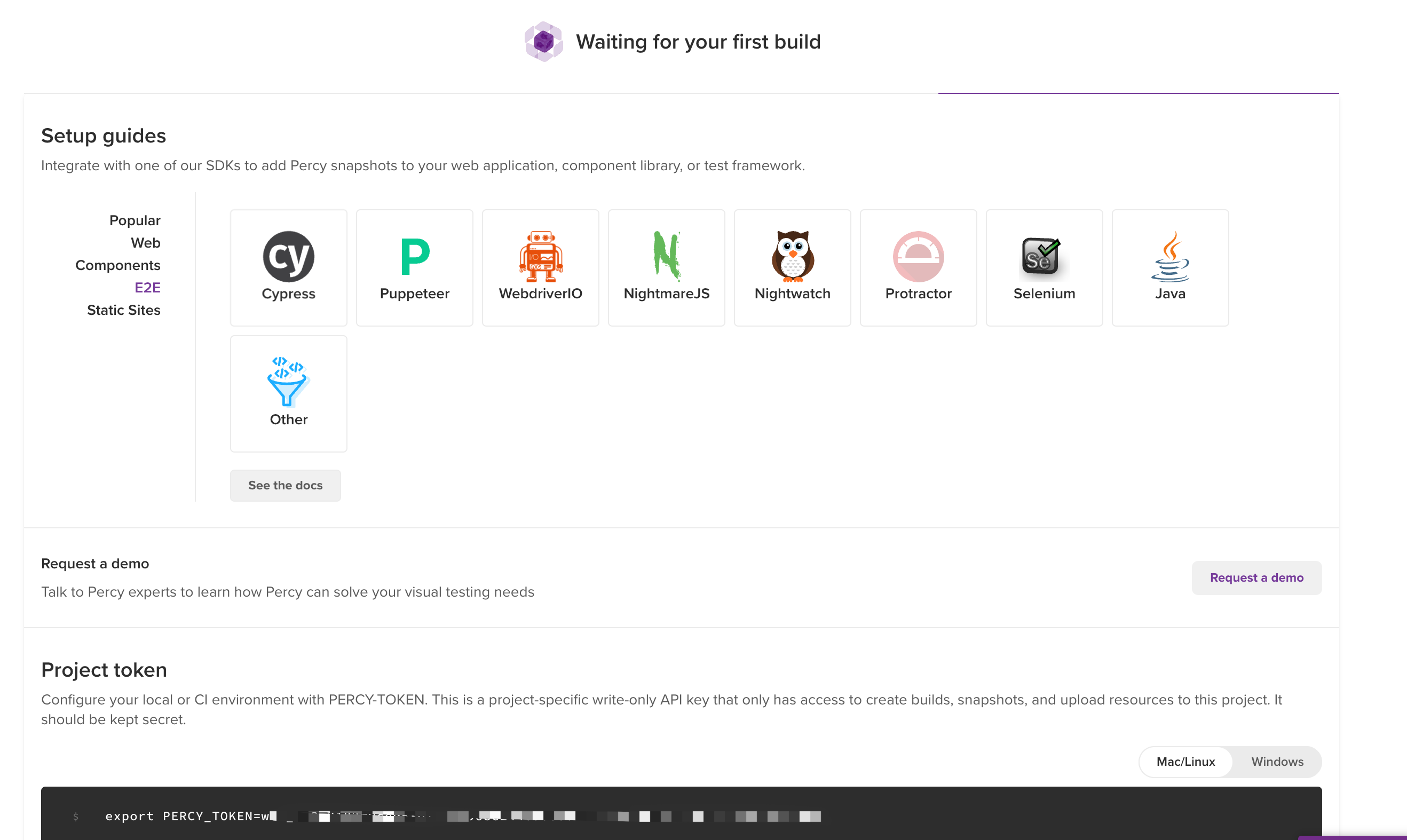

Option 1 - For those actively using or looking to get hands-on with visual testing tools

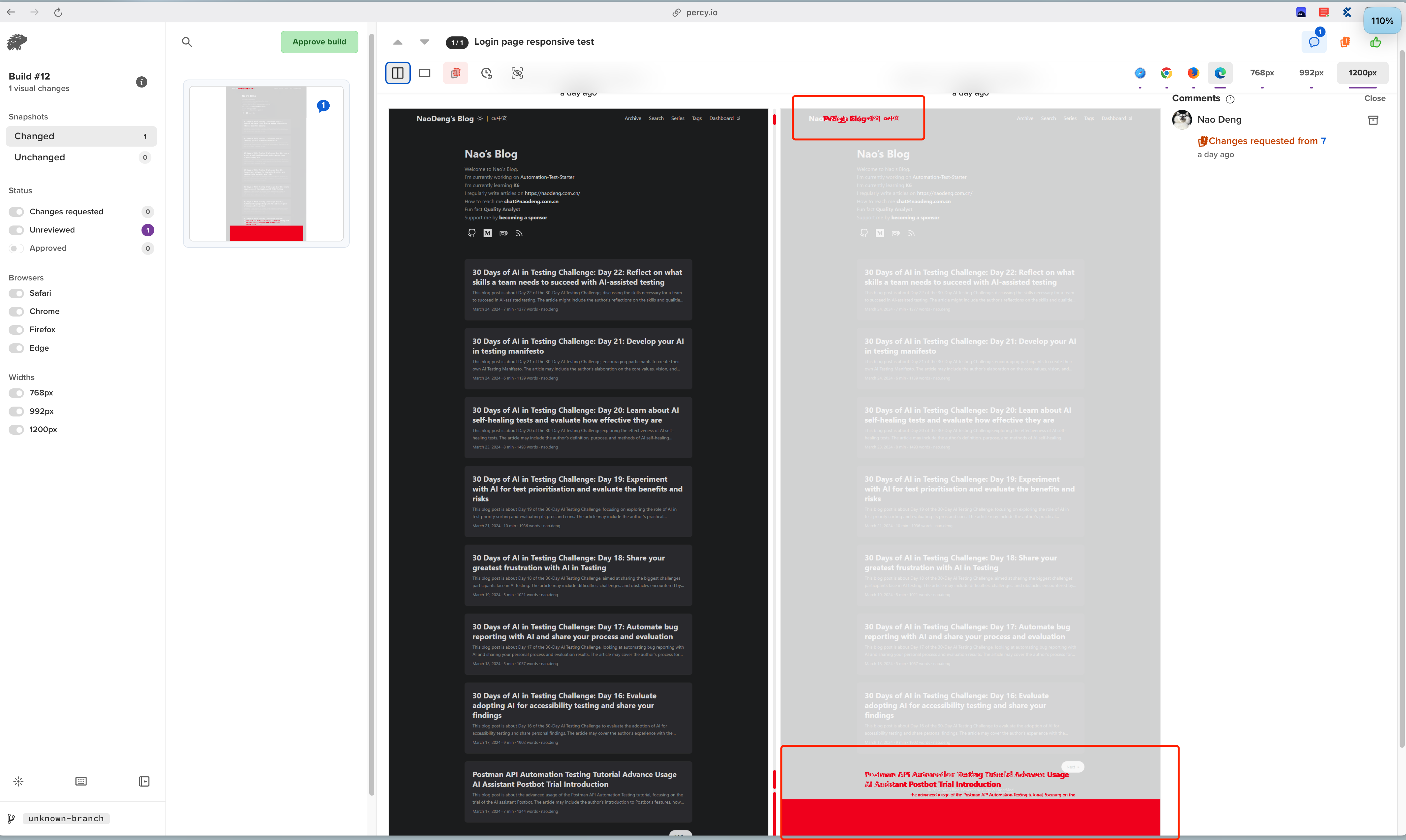

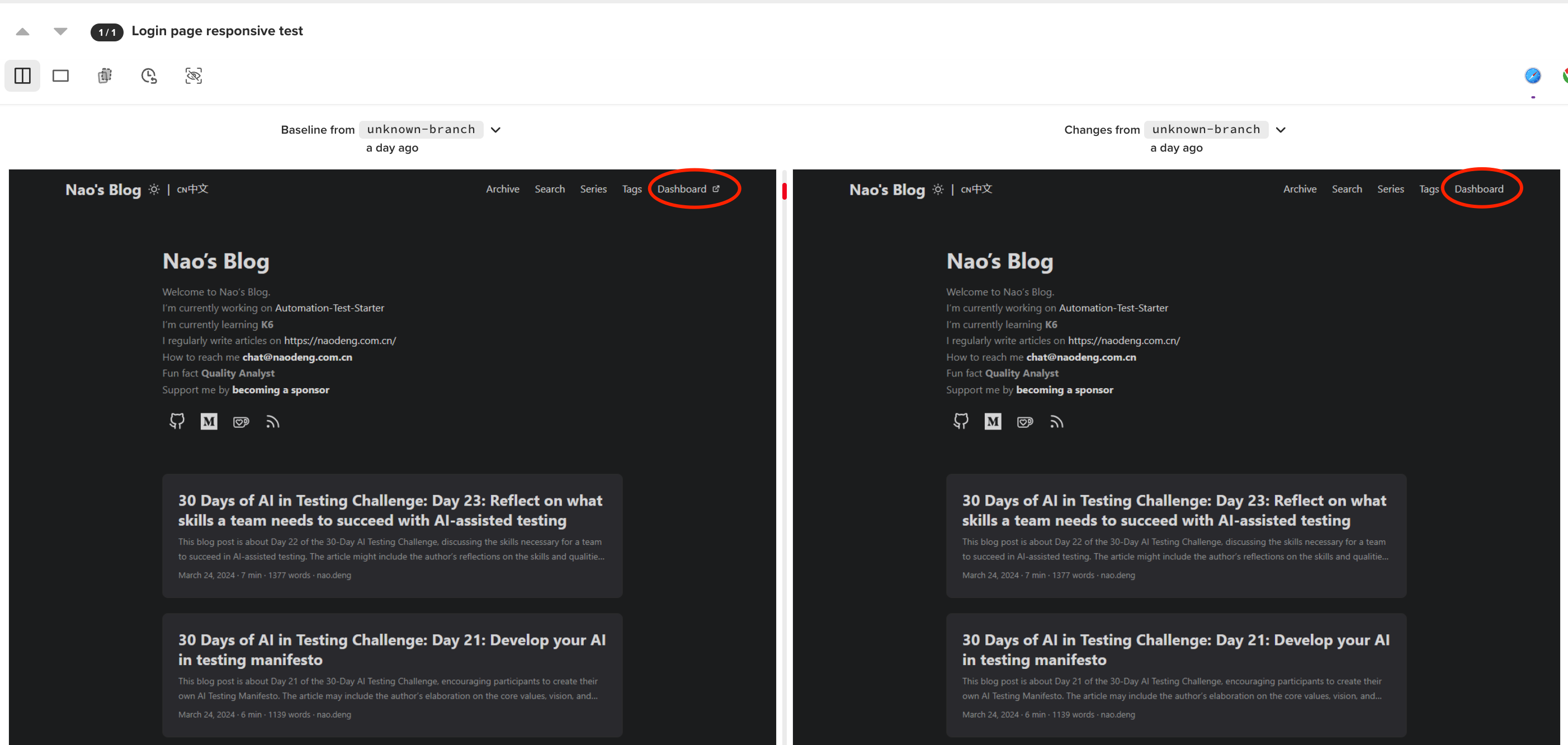

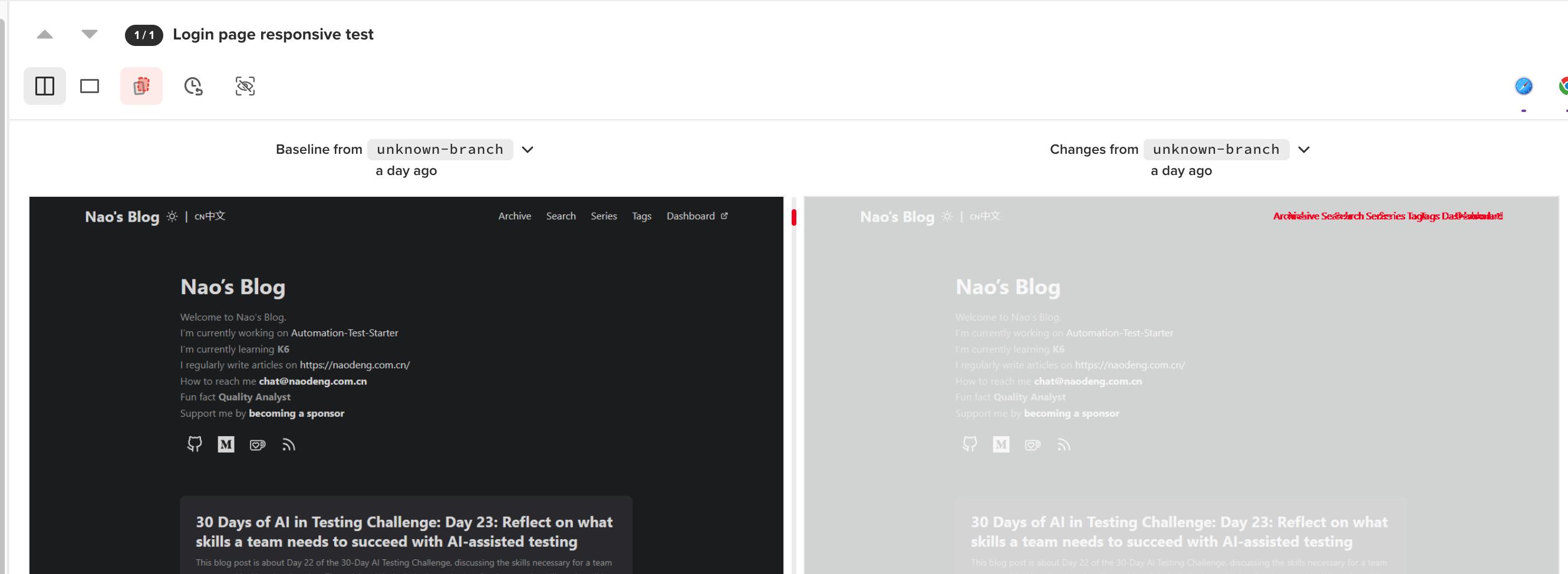

- Select a tool and examine its capabilities: Choose a tool or platform that advertises AI-powered visual testing capabilities. Review its documentation or marketing materials to understand its AI approach and its anomaly detection claims.

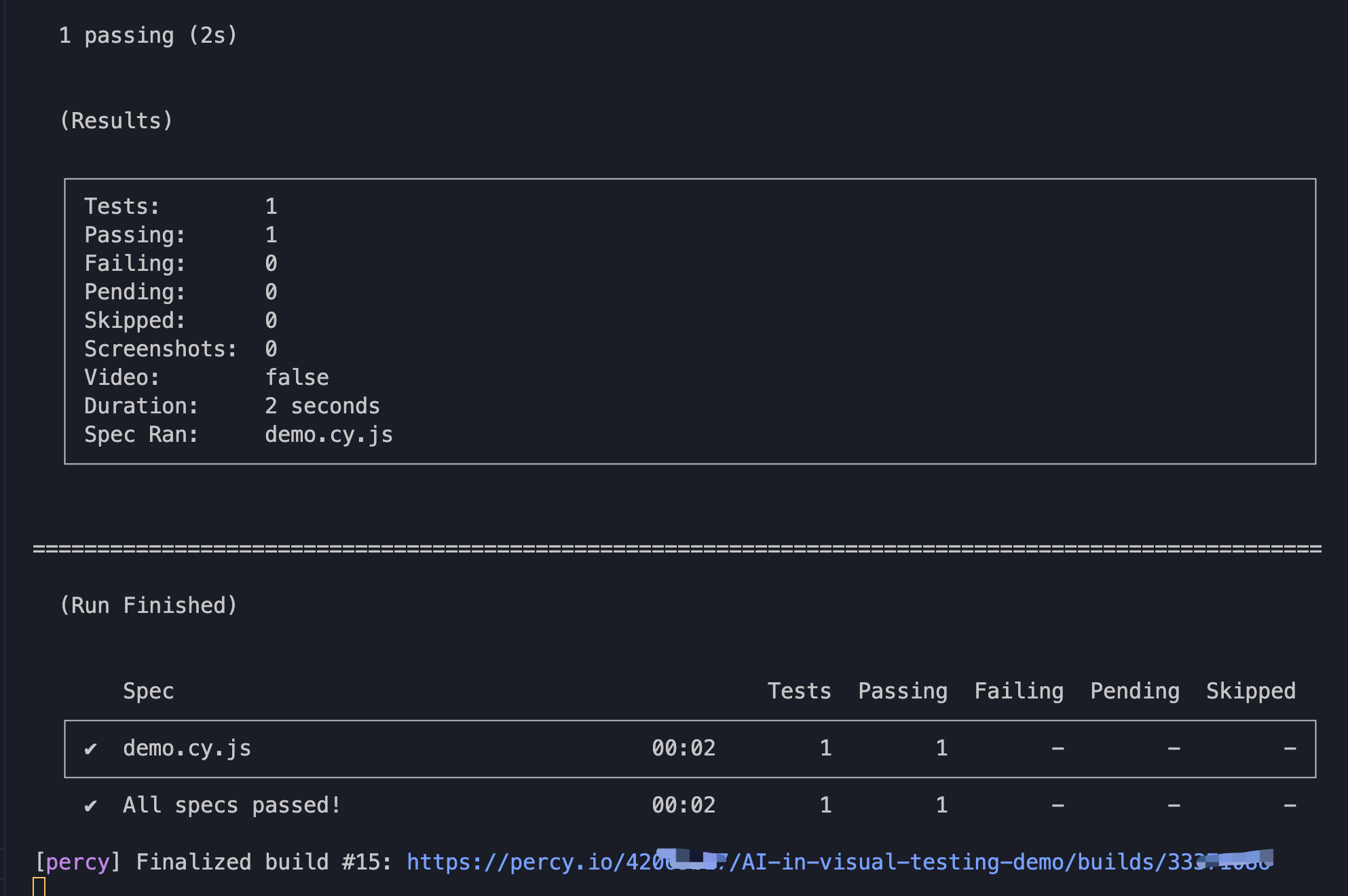

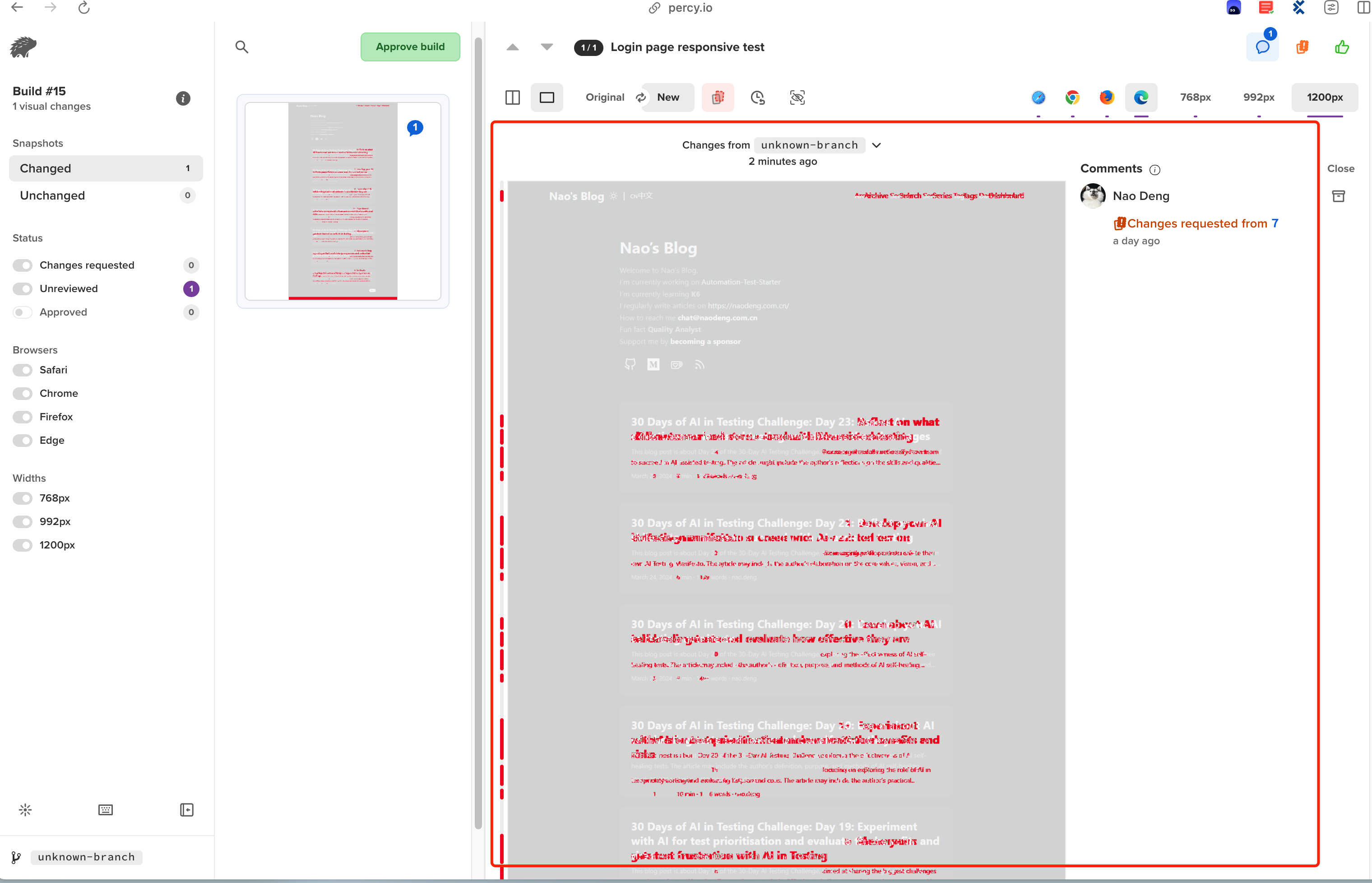

- Test the claims: Design a time-boxed test (e.g., 30 minutes) to evaluate one of the tool’s AI-powered visual testing capabilities. For instance, if it claims to detect layout changes, intentionally modify the GUI and see how well the tool identifies the anomaly.

- Consider failure scenarios: Assuming the tool performed well in your test, construct a scenario where you think it might fail to detect a visual anomaly.

- Share your findings: In reply to this post, share your insights on AI-powered visual testing. Consider including:

  - Which option you selected

  - Which tool you selected and the AI visual testing capabilities it claimed

  - Your findings from your time-boxed experiment

  - The potential advantages and risks of using AI for visual testing

  - How likely you are to continue using the AI-powered visual testing tool
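For the time-boxed test in Option 1, it can help to have a non-AI baseline to compare the tool against. The following is a minimal sketch of a plain pixel-by-pixel comparison in Python. The function names (`diff_mask`, `diff_ratio`, `bounding_box`, `make_screen`) and the tiny synthetic grayscale "screenshots" are illustrative assumptions, not part of any specific visual testing tool; with real screenshots you would load pixel data through an imaging library instead.

```python
from typing import List, Optional, Tuple

Image = List[List[int]]  # grayscale pixels (0-255), stored row by row


def diff_mask(baseline: Image, candidate: Image, tolerance: int = 0) -> List[List[bool]]:
    """Mark each pixel whose brightness differs by more than `tolerance`."""
    if len(baseline) != len(candidate) or any(
        len(a) != len(b) for a, b in zip(baseline, candidate)
    ):
        raise ValueError("screenshots must have identical dimensions")
    return [
        [abs(a - b) > tolerance for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(baseline, candidate)
    ]


def diff_ratio(mask: List[List[bool]]) -> float:
    """Fraction of pixels marked as changed."""
    total = sum(len(row) for row in mask)
    changed = sum(sum(row) for row in mask)
    return changed / total if total else 0.0


def bounding_box(mask: List[List[bool]]) -> Optional[Tuple[int, int, int, int]]:
    """Inclusive (left, top, right, bottom) of the changed region, or None."""
    coords = [(x, y) for y, row in enumerate(mask) for x, hit in enumerate(row) if hit]
    if not coords:
        return None
    xs = [x for x, _ in coords]
    ys = [y for _, y in coords]
    return (min(xs), min(ys), max(xs), max(ys))


def make_screen(button_left: int, width: int = 20, height: int = 10) -> Image:
    """Hypothetical 'screenshot': white background with a dark 4x3 button."""
    screen = [[255] * width for _ in range(height)]
    for y in range(3, 6):
        for x in range(button_left, button_left + 4):
            screen[y][x] = 40
    return screen


baseline = make_screen(button_left=5)

# Seeded layout change: the button moves two pixels to the right.
shifted = make_screen(button_left=7)
mask = diff_mask(baseline, shifted)
print("layout change:", diff_ratio(mask), bounding_box(mask))

# Rendering noise: every pixel is slightly darker, invisible to the eye.
noisy = [[max(0, p - 2) for p in row] for row in baseline]
print("noise, strict:", diff_ratio(diff_mask(baseline, noisy)))
print("noise, tolerant:", diff_ratio(diff_mask(baseline, noisy, tolerance=5)))
```

The strict comparison flags every pixel of the noisy screenshot, while a small tolerance flags none. Tolerating this kind of noise while still catching a genuine layout shift is the sort of claim worth probing in an AI-powered tool, and running both approaches against the same seeded change gives you a concrete comparison to report.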

Option 2 - For those new to visual testing or without access to tools

- Research AI visual testing: Find resources (research papers, blog posts, documentation, video demos) discussing how AI is used for visual testing and GUI anomaly detection.

- Critique the AI approach: Identify the core benefits AI brings to visual testing and the techniques AI systems use to analyse GUI images or screenshots and detect visual anomalies. Then hypothesise scenarios where an AI system might struggle to detect a visual anomaly.

- Assess if AI visual testing is for you: Consider whether an AI-powered visual testing solution would benefit your team based on the challenges you currently face.

- Share your findings: In reply to this post, share your insights on AI-powered visual testing. Consider including:

  - Which option you selected

  - A summary of the problems AI-powered visual testing claims to solve, and how it claims to solve them

  - The potential advantages and risks of adopting an AI visual testing approach

  - How likely you are to adopt AI-powered visual testing tools

Why Take Part:

- Deepen your knowledge: Maintaining robust visual testing as UIs evolve can be challenging. This task helps you understand how AI could potentially streamline this process.

- Develop a critical mindset: When evaluating new testing tools or approaches, it’s crucial to think critically about their capabilities, limitations, and impacts on your team. Today’s task hones that skill.