My Day 26 Task

About Research the Carbon Footprint of AI

I quickly read the article “We’re getting a better idea of AI’s true carbon footprint,” recommended in the challenge assignment.

Hugging Face estimates AI model’s carbon footprint

- Emissions Estimate: BLOOM’s training led to 25 metric tons of CO2 emissions.
- Real-world Impact: AI models’ environmental impact needs further understanding.
- Call to Action: Encouraging more efficient AI research and development.

Summary of the article

The article from MIT Technology Review discusses Hugging Face’s initiative to calculate the carbon footprint of large language models (LLMs) more accurately by considering their entire lifecycle, not just the training phase. Hugging Face applied this methodology to its own model, BLOOM, and found that its carbon emissions were significantly lower than those of other LLMs, partly because nuclear energy was used for training. The research emphasizes the importance of considering AI’s broader environmental impact, including hardware manufacturing and operational emissions, and suggests shifting towards more efficient research practices to reduce carbon footprints.

About Explore Reduction Strategies

I quickly read the article “Why Data And AI Must Become More Sustainable,” recommended in the challenge assignment.

Data and AI’s environmental impact is a growing concern

- Problem: Data and AI’s carbon footprint is a major concern.
- Suggestions: Tools and best practices to mitigate environmental impact.
- Impact: AI’s energy consumption threatens climate change progress.

Summary of the article

The article “Green Intelligence: Why Data And AI Must Become More Sustainable” emphasizes the environmental impact of data and AI technologies, highlighting the growing concerns about their carbon footprint and energy consumption. It discusses the exponential growth in data and AI deployment, exacerbated by the COVID-19 pandemic, leading to significant energy demands and environmental costs. The piece stresses the need for enterprises to address the contribution of data storage and AI to greenhouse gas emissions. To tackle AI’s sustainability impact, the article suggests measures like improving carbon accounting, estimating carbon footprints of AI models, optimizing data storage locations, increasing transparency, and following energy-efficient practices like Google’s “4M” best practices. It also calls for a shift towards new AI paradigms that prioritize environmental sustainability to combat climate change effectively. The article underscores the importance of reforming AI research agendas and enhancing transparency to mitigate the environmental impact of AI and data technologies.

About Share Your Findings

I think the development of AI definitely has a large impact on the environment, and there is a carbon footprint associated with both the research and use of AI.

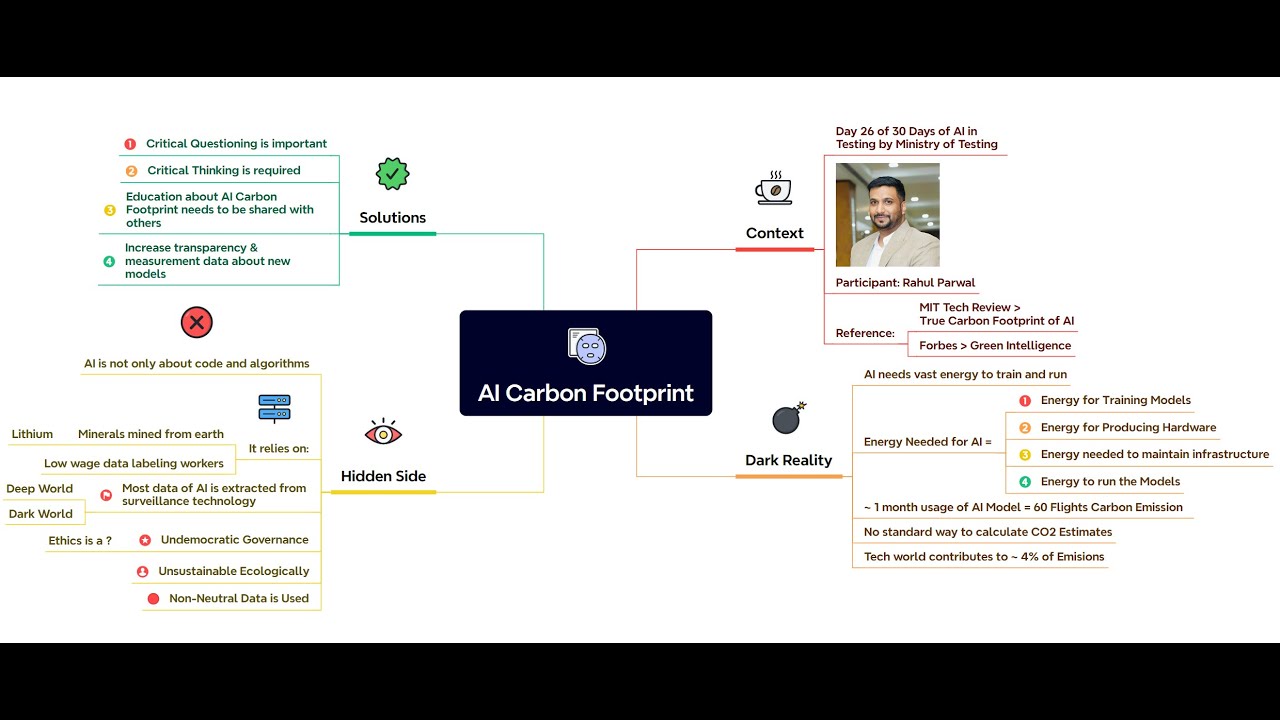

The Carbon Footprint of AI

The development and application of AI are expanding rapidly around the world. As the technology advances, especially with the emergence of large language models (LLMs) and other complex AI systems, concern is growing about its energy consumption and carbon emissions. Training and operating these systems requires substantial computational resources, which translate directly into energy use and the associated carbon emissions.

Discussion and Training of LLMs

Training AI systems such as large language models typically requires significant electrical power and hardware resources. The energy is consumed not only by the computation itself but also by the cooling systems that keep the hardware operating normally. Researchers are exploring ways to reduce energy consumption in these processes, such as optimizing algorithms, using more efficient hardware, and improving data center energy management.
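
To make the relationship between compute energy, cooling overhead, and emissions concrete, here is a minimal sketch of a common back-of-the-envelope estimate: hardware energy, multiplied by a power usage effectiveness (PUE) factor for data center overhead such as cooling, multiplied by the carbon intensity of the electricity grid. The function name and every input value are hypothetical placeholders chosen for illustration; none of them come from the articles above.

```python
def estimate_training_emissions_kg(
    num_devices: int,
    avg_power_watts: float,
    hours: float,
    pue: float,
    grid_kg_co2_per_kwh: float,
) -> float:
    """Estimate operational CO2-equivalent emissions (kg) for a training run.

    pue is the ratio of total facility energy to IT equipment energy,
    so it captures overhead such as cooling and cannot be below 1.0.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    # Energy drawn by the accelerators themselves, in kilowatt-hours.
    it_energy_kwh = num_devices * avg_power_watts * hours / 1000.0
    # Scale up to account for cooling and other facility overhead.
    facility_energy_kwh = it_energy_kwh * pue
    # Convert energy to emissions using the grid's carbon intensity.
    return facility_energy_kwh * grid_kg_co2_per_kwh


# Hypothetical inputs, chosen only to show the arithmetic.
example_kg = estimate_training_emissions_kg(
    num_devices=8,
    avg_power_watts=300.0,
    hours=100.0,
    pue=1.5,
    grid_kg_co2_per_kwh=0.4,
)
print(f"{example_kg:.1f} kg CO2e")
```

This covers only operational electricity use. A lifecycle estimate like the one described for BLOOM would also need to account for hardware manufacturing and idle consumption.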

Operating AI Assistants

Running AI assistants also consumes energy, especially when they are delivered as cloud services. These services typically rely on data centers, whose energy consumption and carbon emissions are an integral part of AI’s carbon footprint. To reduce these impacts, data centers are adopting renewable energy sources and more efficient cooling technologies.

Storing Large Amounts of Data

Data storage is also an essential component of the carbon footprint of AI systems. As the volume of data increases, the energy required to store and back up this data is also rising. Researchers are looking for more efficient data storage solutions and ways to reduce energy consumption by minimizing data redundancy and optimizing storage structures.
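
One simple way to minimize data redundancy is content-addressed deduplication: store each distinct piece of data once and keep only a reference for repeats. The sketch below is an illustrative assumption of how that might look, not a method taken from the articles; the function name and sample records are hypothetical.

```python
import hashlib


def deduplicate_blobs(blobs: list[bytes]) -> tuple[dict[str, bytes], list[str]]:
    """Store each distinct blob once, keyed by its SHA-256 digest.

    Returns the content-addressed store and, for every input blob,
    the key needed to look it up again.
    """
    store: dict[str, bytes] = {}
    keys: list[str] = []
    for blob in blobs:
        digest = hashlib.sha256(blob).hexdigest()
        # Only the first copy of identical content is kept.
        store.setdefault(digest, blob)
        keys.append(digest)
    return store, keys


# Hypothetical records: the first and third are identical.
records = [b"sensor-log-a", b"sensor-log-b", b"sensor-log-a"]
store, keys = deduplicate_blobs(records)
bytes_before = sum(len(r) for r in records)
bytes_after = sum(len(v) for v in store.values())
print(f"stored {bytes_after} of {bytes_before} bytes")
```

The original records can still be rebuilt from `keys`, so nothing is lost. Only the duplicate copy stops taking up space.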

Influencing Factors

- Hardware Requirements: The hardware requirements of AI systems directly affect their energy consumption. Using more energy-efficient processors and storage devices can reduce the carbon footprint.
- Data Center Operations: The energy efficiency of data centers, the proportion of renewable energy used, and the efficiency of cooling systems are all critical factors affecting the carbon footprint of AI.
- Model Optimization Methods: Optimizing AI models, for example by reducing the number of parameters or using techniques like knowledge distillation, can maintain performance while reducing energy consumption during training and inference.
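
Knowledge distillation, mentioned in the last factor, trains a small "student" model to imitate the output distribution of a larger "teacher." The sketch below shows one common formulation of the soft-target loss: the KL divergence between temperature-softened teacher and student distributions, scaled by the square of the temperature. It is written in plain Python for readability; the function names, logits, and default temperature are illustrative assumptions, and real training code would normally use a deep learning framework and combine this term with a standard label loss.

```python
import math


def softmax(logits: list[float], temperature: float) -> list[float]:
    """Convert logits to probabilities, softened by the given temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    teacher_logits: list[float],
    student_logits: list[float],
    temperature: float = 2.0,
) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2.

    Assumes moderate logit values so that no student probability
    underflows to zero.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)
    return kl * temperature ** 2


# Hypothetical logits for a three-class problem.
teacher = [4.0, 1.0, 0.5]
matching_student = [4.0, 1.0, 0.5]
mismatched_student = [0.5, 1.0, 4.0]
print(distillation_loss(teacher, matching_student))
print(distillation_loss(teacher, mismatched_student))
```

The loss is zero when the student reproduces the teacher's distribution and grows as the two diverge, which is the signal the smaller model is trained to minimize.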

Conclusion

The carbon footprint of artificial intelligence is a multidimensional issue that needs to be considered comprehensively from various aspects, including hardware, data center operations, data storage, and model optimization. As awareness of the environmental impact of AI technology grows, future developments will place greater emphasis on sustainability and efficiency to reduce the negative impact on the environment.