I believe the underlying AI models (GPT-3, or BLOOM, an open-source alternative) used to build tools like ChatGPT will have the larger impact. That impact will come in waves as the models become integrated across various products.

Test data is the most obvious use case, followed by test script writing and editing.

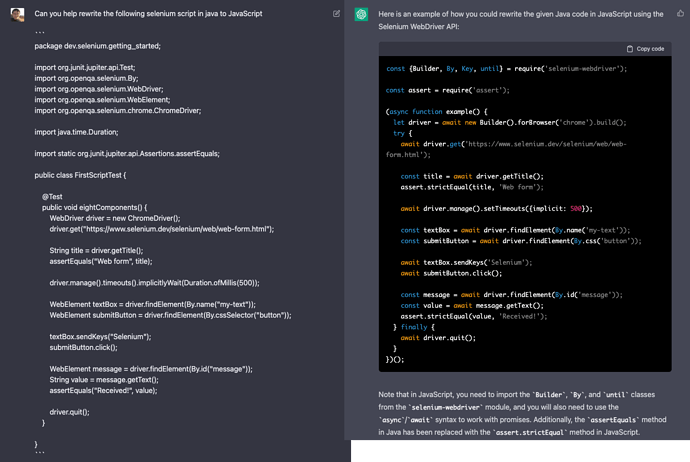

For example, it is able to translate Selenium code from Java to JavaScript.

It may not be 100% accurate, and it may need some human help to fix and iterate… but it did the heavy lifting.

Getting the AI to accurately generate and edit test scripts is something I am working on within my company. From my point of view, that is the end goal: you basically chat back and forth with a bot on how to write and improve the test scripts (like the code conversion above, but on your entire test code).
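As a rough illustration only, here is a minimal Python sketch of that back-and-forth loop. Everything in it is an assumption made for the example: `refine_script`, `fake_model`, and the message format are hypothetical names, and `fake_model` is a stand-in that just appends a note instead of calling a real model.

```python
from typing import Callable, Dict, List

# Assumed message shape: {"role": "user" | "assistant", "content": "..."}
Message = Dict[str, str]


def refine_script(
    script: str,
    requests: List[str],
    model: Callable[[List[Message]], str],
) -> str:
    """Apply each human request in turn, keeping the full chat history."""
    history: List[Message] = [
        {"role": "user", "content": f"Here is my test script:\n{script}"}
    ]
    for request in requests:
        history.append({"role": "user", "content": request})
        script = model(history)  # the model is expected to return the revised script
        history.append({"role": "assistant", "content": script})
    return script


def fake_model(history: List[Message]) -> str:
    """Hypothetical stand-in for a real model call: it only records the request."""
    request = history[-1]["content"]
    previous = [m["content"] for m in history if m["role"] == "assistant"]
    # Start from the latest revision, or from the original script on the first turn.
    base = previous[-1] if previous else history[0]["content"].split("\n", 1)[1]
    return f"{base}\n# TODO ({request})"


if __name__ == "__main__":
    final = refine_script(
        "driver.get('https://example.com')",
        ["add a login check", "wait for the page title"],
        fake_model,
    )
    print(final)
```

In practice a human would review each revision before sending the next request, since the model can get things wrong.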

Its role will be more of an assistant alongside us human testers, because in my current experiments it is very flawed in various ways. It does not understand some weird things, especially when certain bugs are features. Basically, the more subjective feedback parts.

To quote a co-worker, it is like hiring your own intern. They will make mistakes, but they are still useful.

Also, I do agree with @bethtesterleeds: this technology does have serious ethical implications outside the testing space, when mixed into the “privacy” or “right of an artist” areas. Though that might be another topic altogether (calling it a problem might be an understatement).

PS: It is very difficult to predict new frontier tech changes, so the above is just my guess, and I may end up being completely wrong. I'm also a startup founder who works on test automation tools and AI, so I do have a conflict of interest in this topic.