Only ten more days to go on this challenge, and today we are going to examine the claims about Self-Healing Tests. Self-healing was one of the earliest capabilities claimed for AI in Testing, and there are three key questions we want to answer:

- What does Self-Healing Tests really mean?

- What are the risks of Self-Healing Tests?

- Is this a useful feature?

We know that not everyone is interested in learning new automation tools, so similar to yesterday’s task, there are two options and you are free to pick one (or both).

Task Steps

Option 1: This option is for you if you currently use a tool that claims Self-Healing Tests, or if you are interested in a deep dive into Self-Healing Tests and have time to learn a new tool. For this option, the steps are:

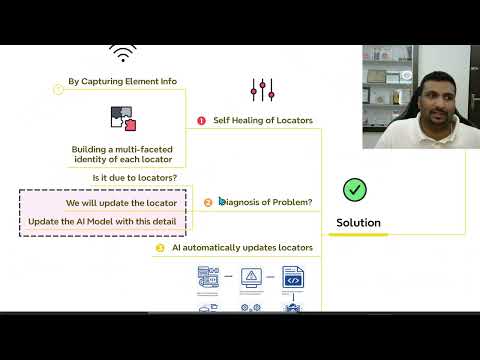

- What types of problems does your tool claim to heal? Read the documentation for your selected tool to understand what the tool means by Self-Healing Tests. Try to understand the types of test issues that the tool claims to heal and how this mechanism works.

- Test one of their claims: Design a 20 minute time-boxed test of one of the Self-Healing capabilities of the tool. Run the test and evaluate how well you think the Self-Healing mechanism performed. Some ideas are:

  - If the tool claims to detect changed element locators, you could change the locator in a test so that the test will fail, then run the tool and check how well it heals the failing test.

  - If the tool claims to correct the sequencing of actions, you could swap two parts of a test so that it fails, then run the tool and check how well it heals the failing test.

- How might this feature fail? Based on the claim you tested, and assuming the self-healing worked in your test, under what other conditions might it fail? Can you construct a scenario where it does fail?
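If you want a mental model of locator healing before you test a real tool, the following is a minimal sketch in plain Python. It is not how any particular vendor implements the feature: the element "fingerprint", the attribute weights, the threshold, and the function names are all assumptions made up for illustration. The idea is that when the original locator no longer matches, the tool scores every element on the page against what it remembered and picks the closest one.

```python
from difflib import SequenceMatcher

# Hypothetical weights for the attributes a tool might remember about an element.
# These names and numbers are illustrative assumptions, not a real vendor schema.
WEIGHTS = {"id": 0.4, "text": 0.3, "tag": 0.2, "css_class": 0.1}


def similarity(a: str, b: str) -> float:
    """Return a string similarity ratio between 0 and 1."""
    return SequenceMatcher(None, a, b).ratio()


def score(fingerprint: dict, candidate: dict) -> float:
    """Weighted similarity between the remembered fingerprint and a candidate element."""
    return sum(
        weight * similarity(fingerprint.get(attr, ""), candidate.get(attr, ""))
        for attr, weight in WEIGHTS.items()
    )


def find_element(page: list, fingerprint: dict, threshold: float = 0.6):
    """Try the exact id first; if it is gone, 'heal' by picking the best-scoring element."""
    for element in page:
        if element.get("id") == fingerprint["id"]:
            return element, False  # found without healing
    best = max(page, key=lambda el: score(fingerprint, el))
    if score(fingerprint, best) >= threshold:
        return best, True  # healed: a different locator was substituted
    raise LookupError("no element is similar enough, so the test fails")


# What the tool remembered from the last passing run.
fingerprint = {"id": "submit-btn", "text": "Submit order",
               "tag": "button", "css_class": "btn primary"}

# The page after a developer renamed the button's id.
page_after_change = [
    {"id": "order-submit", "text": "Submit order",
     "tag": "button", "css_class": "btn primary"},
    {"id": "cancel-btn", "text": "Cancel order",
     "tag": "button", "css_class": "btn secondary"},
]

element, healed = find_element(page_after_change, fingerprint)
print(element["id"], healed)  # order-submit True
```

A sketch like this also suggests where to probe a real tool: what happens when the intended element is removed entirely, or when two candidates score almost the same?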

Option 2: This option is for you if you are interested in finding out more about Self-Healing Tests but don’t have time to learn a new tool. For this option, the steps are:

- Find and read an article or paper that discusses Self-Healing Tests: This could be a research paper, blog post or vendor documentation that deals with the specifics of Self-Healing Tests.

- Try to understand the types of issues with tests that the tool claims to heal.

- If possible, uncover how the issues are detected and resolved.

- How valuable is a feature like this to your team? Consider the challenges your team faces and whether Self-Healing Tests are valuable to your team.

- How might this fail? Based on your reading, how might this Self-Healing fail in a way that matters? For example, could it heal a test in a way that results in the purpose of the test changing from what you originally intended?
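The last question can be illustrated with a small, hypothetical sketch in plain Python. Nothing here reflects a real tool's behaviour: `naive_heal`, `review_heal`, and the element attributes are invented for the example. It shows a healer that always picks the closest remaining element, even when the intended element has been removed, and a simple review check that flags heals where the element's visible text changed.

```python
def match_count(fingerprint: dict, candidate: dict) -> int:
    """Count how many remembered attributes the candidate matches exactly."""
    return sum(1 for key, value in fingerprint.items() if candidate.get(key) == value)


def naive_heal(fingerprint: dict, page: list) -> dict:
    """Always return the closest element, with no minimum bar (the risky part)."""
    return max(page, key=lambda el: match_count(fingerprint, el))


def review_heal(fingerprint: dict, healed: dict, must_match=("text",)) -> list:
    """Return the must-match attributes that changed; non-empty means a human should look."""
    return [attr for attr in must_match if fingerprint.get(attr) != healed.get(attr)]


# The test was written to click "Delete account".
fingerprint = {"id": "delete-account", "text": "Delete account",
               "tag": "button", "css_class": "btn primary"}

# In the new release the delete button has been removed from this page.
page_after_change = [
    {"id": "save", "text": "Save changes", "tag": "button", "css_class": "btn primary"},
    {"id": "help", "text": "Help", "tag": "a", "css_class": "link"},
]

healed = naive_heal(fingerprint, page_after_change)
changed = review_heal(fingerprint, healed)
print(healed["text"], changed)  # Save changes ['text']
```

Here the "healed" test would click Save changes and might still pass, even though it no longer exercises account deletion. When reading vendor material, it is worth checking whether the tool reports its heals and whether it lets you review or reject them.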

Share Your Insights: Regardless of which investigative option you choose, respond to this post with your insights and share:

- Which option you chose.

- What your perceptions are of Self-Healing Tests (what problem they solve and how).

- The ways in which Self-Healing Tests might benefit or fail your team.

- How likely you are to use (or continue to use) tools with this feature.

Why Take Part

- Deepen your understanding of Self-Healing Tests: Maintaining tests through new iterations of a product can be challenging, so tooling that can reduce this maintenance effort is valuable. By taking part in this task, you are developing a sense of what Self-Healing Tests actually means and how it can help your team.

- Improve your critical thinking about vendor claims: When selecting tools to support testing, we are often faced with many fantastic-sounding claims. This task allows you to think critically about the claims being made, their limitations, and how they might impact your team.